Data Generation, Collection, and Feature Engineering

AI/ML Data Stage

To build a realistic anomaly detection workflow, the data was not treated as an abstract benchmark. Instead, it was generated and collected from a practical SDR-based 5G environment. The setup included a machine running the 5G core and gNB stack, a separate machine acting as the UE-side receiver and analysis node, and a jammer machine used to inject controllable attack scenarios. This made it possible to observe both normal RF behavior and attack-driven spectral deviations under realistic over-the-air conditions.

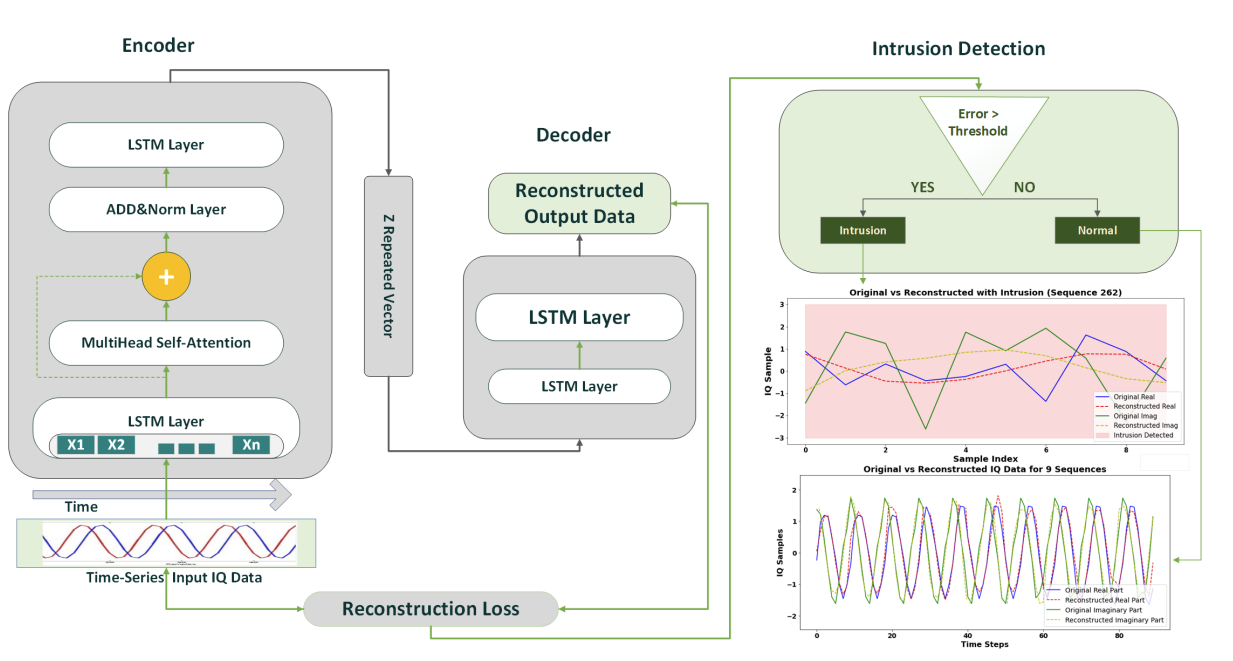

From the collected I/Q streams, the data engineering stage focused on transforming raw signal captures into model-ready sequential inputs. This included windowing, sequence construction, normalization, and organization of temporal slices for training and inference. In other words, this stage plays the role of feature engineering for time-series RF learning, where the objective is to preserve temporal behavior while making the sequences consistent and learnable for the encoder-decoder model.
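The windowing and normalization described above can be sketched as follows. This is a minimal illustration, not the pipeline actually used: the function names (`iq_to_sequences`, `fit_normalizer`, `normalize`), the window length and hop size, the two-channel I/Q layout, and the per-channel z-score normalization are all assumptions chosen for the example, and the capture is synthetic noise rather than recorded data.

```python
import numpy as np


def iq_to_sequences(iq, window_len=256, hop=128):
    """Slice a 1-D complex I/Q capture into fixed-length windows.

    Returns a float32 array of shape (n_windows, window_len, 2), where the
    last axis holds the in-phase (I) and quadrature (Q) components.
    window_len and hop are illustrative values, not taken from the source.
    """
    iq = np.asarray(iq, dtype=np.complex64)
    if iq.ndim != 1 or iq.size < window_len:
        raise ValueError("expected a 1-D capture at least window_len samples long")
    n_windows = 1 + (iq.size - window_len) // hop
    starts = np.arange(n_windows) * hop
    # Index matrix: one row of sample indices per window.
    idx = starts[:, None] + np.arange(window_len)[None, :]
    windows = iq[idx]
    return np.stack([windows.real, windows.imag], axis=-1).astype(np.float32)


def fit_normalizer(train_sequences):
    """Compute per-channel mean and std from training sequences only."""
    mean = train_sequences.mean(axis=(0, 1))
    std = train_sequences.std(axis=(0, 1))
    return mean, np.maximum(std, 1e-8)  # guard against division by zero


def normalize(sequences, mean, std):
    """Apply the statistics fitted on training data to any set of sequences."""
    return (sequences - mean) / std


# Synthetic stand-in for a recorded capture (assumption: complex baseband samples).
rng = np.random.default_rng(0)
capture = rng.standard_normal(4096) + 1j * rng.standard_normal(4096)

sequences = iq_to_sequences(capture, window_len=256, hop=128)
mean, std = fit_normalizer(sequences)
model_input = normalize(sequences, mean, std)
print(model_input.shape)  # (31, 256, 2)
```

Fitting the normalization statistics on training data and reusing them at inference keeps the scaling consistent between the two phases, which is one common way to make the sequences comparable for the encoder-decoder model.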

Experimental components

- Machine 1: srsRAN gNB + 5G core stack

- Machine 2: UE-side reception and analysis workflow

- Machine 3: jammer generation with GNU Radio

- RF hardware: USRP / SDR front-end devices

- Captured data: waveform-level I/Q streams

Feature engineering steps

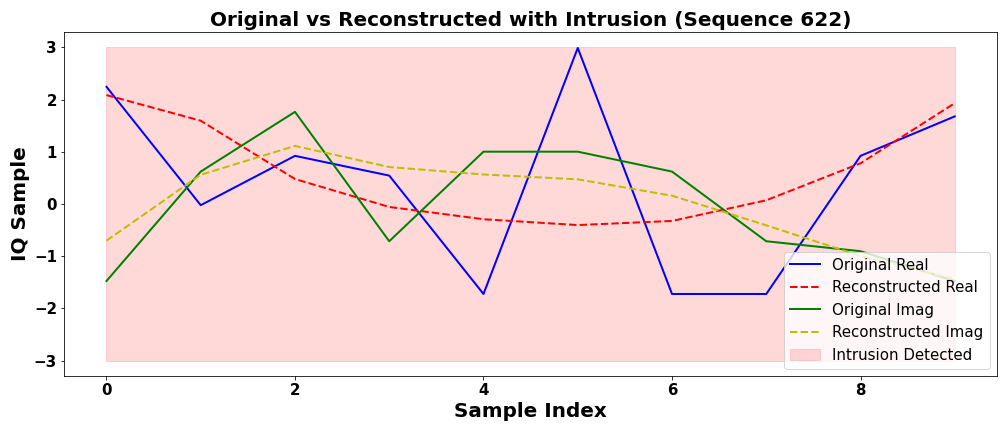

- Signal collection: normal and jammer-affected RF activity

- Temporal slicing: conversion of raw samples into fixed sequences

- Preprocessing: normalization and batching for training stability

- Labeling logic: normal vs intrusion intervals for evaluation

- Objective: preserve temporal structure for sequence modeling
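The labeling step in the list above can be illustrated with a small sketch that assigns each temporal slice a normal or intrusion label from known jammer-active intervals. The function name `label_windows`, the half-open sample-index intervals, and the 50% overlap threshold are hypothetical choices made for this example; the source does not specify how labels were derived.

```python
def label_windows(n_windows, window_len, hop, attack_intervals, min_overlap=0.5):
    """Label each window 1 (intrusion) or 0 (normal).

    attack_intervals is a list of (start, end) sample indices, half-open and
    assumed non-overlapping, marking when the jammer was active. A window is
    labeled as intrusion when at least min_overlap of its samples fall inside
    those intervals. The threshold is an assumption for illustration.
    """
    labels = []
    for w in range(n_windows):
        start = w * hop
        end = start + window_len
        # Count samples of this window that lie inside any attack interval.
        covered = sum(
            max(0, min(end, a_end) - max(start, a_start))
            for a_start, a_end in attack_intervals
        )
        labels.append(1 if covered / window_len >= min_overlap else 0)
    return labels


# Example: four windows of 4 samples with hop 2, jammer active on samples 4..7.
print(label_windows(4, 4, 2, [(4, 8)]))  # [0, 1, 1, 1]
```

Because the labels are described as being for evaluation, a sketch like this would be applied after slicing, so that window-level detections can be compared against the intervals in which the jammer was transmitting.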